| Inference Time | Cold Start Time | Token/Sec | Latency/Token | VRAM Required |
|---|---|---|---|---|
| 11.96 secs | 7.82 secs | 21.34 | 46.85 ms | 1.72 GB |

Why finetuning?

Fine-tuning adapts a base language model to a specific task or domain. Techniques like Reinforcement Learning from Human Feedback (RLHF) incorporate human feedback into training, which makes it possible to build custom, task-specific, and expert models. The process involves setting up a good starting point, collecting human feedback, and iteratively improving the model. For this tutorial, we will use an RLHF-like technique known as Direct Preference Optimization (DPO). DPO trains directly on pairs of preferred and rejected responses, and its authors report that it aligns with human preferences as well as or better than existing methods, which makes it a promising alternative to RLHF for fine-tuning language models to meet specific human preferences.

Let’s get started:

Installing the Required Libraries

You need the following libraries for fine-tuning the Phi-2 model using DPO.

Dataset for DPO

The DPO trainer requires a very specific type of dataset, made up of preferred and rejected responses for a given prompt. The preference dataset follows a defined format:

- Prompt: The context prompt provided to the model during inference. It serves as the input for the text generation process.

- Chosen: The “chosen” key holds the information about the preferred generated response corresponding to the given prompt.

- Rejected: The “rejected” key contains information about a response that is not preferred or should be avoided when generating text in response to the provided prompt.
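
The three keys above can be sketched as a small helper that turns one raw comparison into a preference record. This is only an illustration: the function name `to_preference_record`, its argument names, and the `Instruct: ... Output:` prompt template are assumptions made for this example, not part of the original tutorial code.

```python
def to_preference_record(question, preferred, dispreferred,
                         template="Instruct: {question}\nOutput: "):
    """Build one preference example with the prompt/chosen/rejected keys.

    The default template is an assumption; swap in whatever prompt
    format your base model expects.
    """
    fields = {"question": question, "preferred": preferred,
              "dispreferred": dispreferred}
    for name, value in fields.items():
        if not isinstance(value, str) or not value.strip():
            raise ValueError(f"{name} must be a non-empty string")

    return {
        "prompt": template.format(question=question.strip()),
        "chosen": preferred.strip(),      # the response to prefer
        "rejected": dispreferred.strip(), # the response to avoid
    }


record = to_preference_record(
    "Summarize DPO in one sentence.",
    "DPO fine-tunes a model directly on pairs of preferred and rejected responses.",
    "I don't know.",
)
print(sorted(record))
```

A list of such dictionaries can then be turned into whatever dataset object your trainer expects.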

Finetuning the Phi-2 model with DPO
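
DPO scores every preference pair against a frozen reference model, which is why this step works with two models. As a rough sketch of the idea (not the trainer's actual implementation), the per-pair loss can be written in plain Python. The function name `dpo_loss`, the argument names, and the default `beta=0.1` are assumptions chosen for illustration; the inputs are the summed log-probabilities each model assigns to the chosen and rejected responses.

```python
import math


def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """Per-pair DPO loss: -log(sigmoid(beta * (chosen_margin - rejected_margin)))."""
    # How much more (or less) likely each response is under the policy
    # than under the frozen reference model.
    chosen_margin = policy_chosen_logp - ref_chosen_logp
    rejected_margin = policy_rejected_logp - ref_rejected_logp
    logits = beta * (chosen_margin - rejected_margin)

    # Numerically stable -log(sigmoid(logits)).
    if logits >= 0:
        return math.log1p(math.exp(-logits))
    return -logits + math.log1p(math.exp(logits))


# Policy identical to the reference: the loss is log(2).
print(dpo_loss(-10.0, -10.0, -10.0, -10.0))
# Policy favors the chosen response more than the reference does: lower loss.
print(dpo_loss(-5.0, -15.0, -10.0, -10.0))
```

The loss falls as the policy raises the likelihood of the chosen response, relative to the reference, more than it raises the likelihood of the rejected one.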

Once the dataset is formatted, you are ready to finetune. DPO requires two models: the model that you want to finetune (Phi-2) and a reference model. Load the tokenizer and the model, then quantize the model to 4-bit with bitsandbytes and prepare it for finetuning.

Quantize and Inference

Loading the finetuned model required 5.19 GB of VRAM. To reduce the memory requirement, we quantize the model when loading it. bitsandbytes lets you load the model in 4 bits, which reduces the memory requirement to 1.72 GB. Here are our observations:

| Inference Time | Cold Start Time | Token/Sec | Latency/Token | VRAM Required |
|---|---|---|---|---|
| 11.96 secs | 7.82 secs | 21.34 | 46.85 ms | 1.72 GB |
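
The figures in the table are related by simple arithmetic, which the sketch below reproduces. The helper names are hypothetical, and the parameter count of 2.7 billion for Phi-2 is an assumption taken from the model's public description, not from this tutorial. The memory estimate covers weights only, so measured VRAM will be higher because of layers that stay unquantized, activations, and GPU runtime overhead.

```python
def tokens_per_second(latency_ms_per_token):
    """Throughput is the inverse of per-token latency."""
    return 1000.0 / latency_ms_per_token


def weight_memory_gib(num_params, bits_per_param):
    """Approximate memory for the weights alone, in GiB."""
    return num_params * bits_per_param / 8 / 2**30


PHI2_PARAMS = 2.7e9  # assumption: approximate parameter count of Phi-2

throughput = tokens_per_second(46.85)    # latency from the table
approx_tokens = throughput * 11.96       # inference time from the table

print(f"throughput:        {throughput:.2f} tokens/sec")
print(f"tokens generated:  ~{approx_tokens:.0f}")
print(f"fp16 weights:      ~{weight_memory_gib(PHI2_PARAMS, 16):.2f} GiB")
print(f"4-bit weights:     ~{weight_memory_gib(PHI2_PARAMS, 4):.2f} GiB")
```

A latency of 46.85 ms per token corresponds to the 21.34 tokens per second reported in the table.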

Last step: Tutorial to deploy on Inferless

Constructing the GitHub/GitLab Template

Now construct the GitHub/GitLab template. This step is mandatory. Make sure you don’t add any file named `model.py`.

Create the class for inference

In `app.py`, we will define the class and import all the required functions:

- `def initialize`: In this function, you will initialize your model and define any variable that you want to use during inference.

- `def infer`: This function gets called for every request that you send. Here you can define all the steps that are required for the inference. You can also pass custom values for inference through the `inputs` (dict) parameter.

- `def finalize`: This function cleans up all the allocated memory.
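
The three functions described above can be laid out as the skeleton below. Treat it as a sketch under stated assumptions: the class name `InferlessPythonModel`, the `prompt` input key, and the `generated_text` output key are chosen for illustration, and the model is replaced by a stand-in function so the example runs without a GPU.

```python
class InferlessPythonModel:
    """Skeleton of the inference class (names here are assumptions)."""

    def initialize(self):
        # A real app.py would load the quantized Phi-2 model and its
        # tokenizer here. This stand-in just echoes the prompt.
        self.generator = lambda prompt: f"(generated text for: {prompt})"

    def infer(self, inputs):
        # Called once per request; `inputs` is a dict of request values.
        prompt = inputs["prompt"]  # hypothetical input key
        return {"generated_text": self.generator(prompt)}  # hypothetical output key

    def finalize(self):
        # Drop references so the allocated memory can be reclaimed.
        self.generator = None


model = InferlessPythonModel()
model.initialize()
print(model.infer({"prompt": "What is DPO?"}))
model.finalize()
```

Loading the model in `initialize` rather than in `infer` means the expensive setup runs once, not on every request.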

Creating the Custom Runtime

This is a mandatory step: Inferless lets you upload a custom runtime through `inferless-runtime-config.yaml`.

Method A: Deploying the model on Inferless Platform

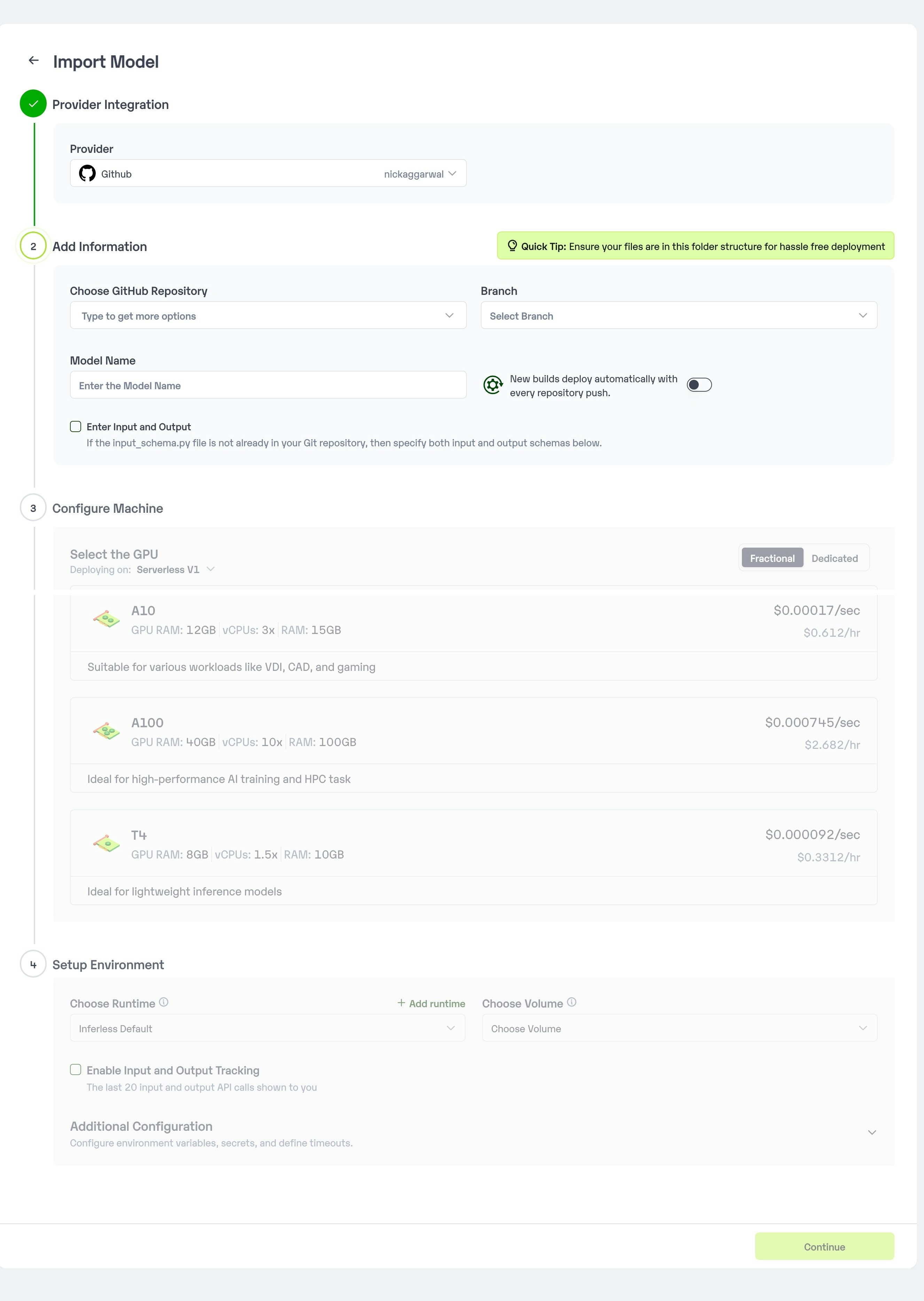

Inferless supports multiple ways of importing your model. For this tutorial, we will use GitHub.

Step 1: Log in to the Inferless dashboard and click on the Import model button

Navigate to your desired workspace in Inferless and click on the Add a custom model button at the top right. An import wizard will open up.

Step 2: Follow the UI to complete the model Import

- Select the GitHub/GitLab Integration option to connect your source code repository with the deployment environment.

- Navigate to the specific GitHub repository that contains your model’s code. Here, you will need to identify and enter the name of the model you wish to import.

- Choose the appropriate type of machine that suits your model’s requirements. Additionally, specify the minimum and maximum number of replicas to define the scalability range for deploying your model.

- Optionally, enable automatic build and deployment. This feature triggers a new deployment automatically whenever there is a new code push to your repository.

- If your model requires additional software packages, configure the Custom Runtime settings by including necessary pip or apt packages. Also, set up environment variables such as Inference Timeout, Container Concurrency, and Scale Down Timeout to tailor the runtime environment according to your needs.

- Wait for the validation process to complete, ensuring that all settings are correct and functional. Once validation is successful, click on the “Import” button to finalize the import of your model.

Step 3: Wait for the model build to complete (this usually takes ~5-10 minutes)

Step 4: Use the APIs to call the model

Once the model is in ‘Active’ status, you can click on the ‘API’ page to call the model.

Here is the Demo:

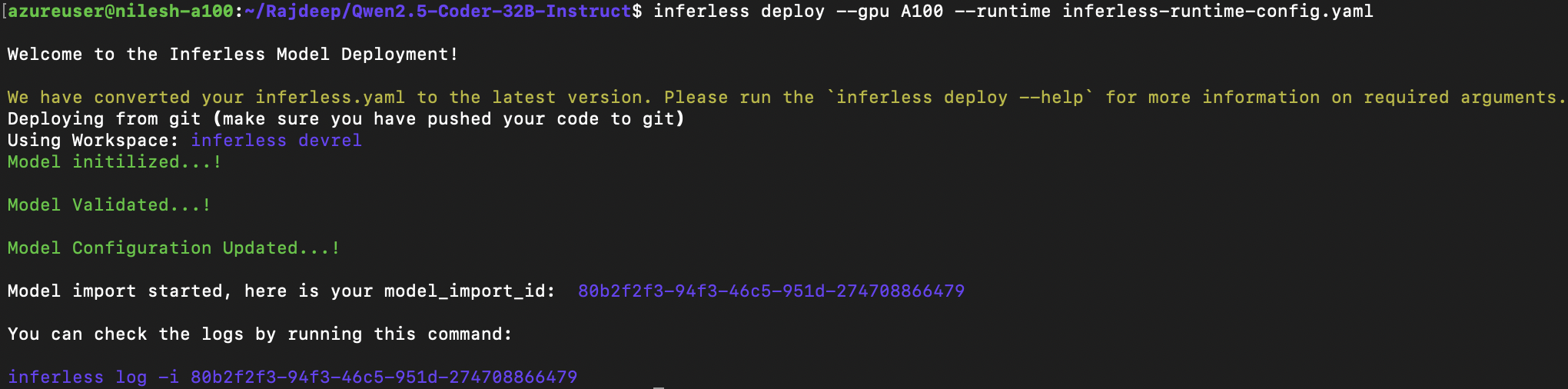

Method B: Deploying the model using Inferless CLI

Inferless allows you to deploy your model using Inferless-CLI. Follow the steps below to deploy using Inferless CLI.

Clone the repository of the model

Let’s begin by cloning the model repository:

Deploy the Model

To deploy the model using Inferless CLI, execute the deployment command with these options:

- `--gpu A100`: Specifies the GPU type for deployment. Available options include `A10`, `A100`, and `T4`.

- `--runtime inferless-runtime-config.yaml`: Defines the runtime configuration file. If not specified, the default Inferless runtime is used.