Introduction

FLUX.1-schnell, developed by Black Forest Labs, is the fastest model in the FLUX.1 suite of text-to-image generation models. It offers an impressive balance of speed and quality, outperforming many competitors including some non-distilled models. Designed for local development and personal use, FLUX.1-schnell is openly available under an Apache 2.0 license. It utilizes a hybrid architecture of multimodal and parallel diffusion transformer blocks, scaled to 12B parameters, and supports a wide range of aspect ratios and resolutions.

Our Observations

We deployed this model on an A100 GPU and observed an average cold start time of 11.60 sec and an average inference time of 0.67 sec for image generation.

Defining Dependencies

We are using the HuggingFace Diffusers library for the deployment.

Constructing the GitHub/GitLab Template

Next, construct the GitHub/GitLab template. This step is mandatory. Make sure you don’t add any file named model.py.

Create the class for inference

In app.py, we define the class and import all the required functions:

- def initialize: In this function, you initialize your model and define any variable that you want to use during inference.

- def infer: This function gets called for every request that you send. Here you can define all the steps that are required for the inference. You can also pass custom values for inference through the inputs parameter.

- def finalize: This function cleans up all the allocated memory.
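The three functions above could be sketched as follows. This is a minimal sketch, not the tutorial's actual app.py: the class name InferlessPythonModel, the default values, and the output key generated_image_base64 are assumptions made for illustration. The FluxPipeline calls come from the HuggingFace Diffusers library, and the heavy imports are deferred into initialize so the file can be imported on a machine without a GPU.

```python
import base64
import io


class InferlessPythonModel:
    """Assumed class name; use whatever name your template expects."""

    def initialize(self):
        # Deferred imports: torch and diffusers are only needed once the
        # model is actually loaded on the GPU machine.
        import torch
        from diffusers import FluxPipeline

        self.pipe = FluxPipeline.from_pretrained(
            "black-forest-labs/FLUX.1-schnell",
            torch_dtype=torch.bfloat16,
        ).to("cuda")

    def infer(self, inputs):
        # Default values below are illustrative, not taken from the tutorial.
        result = self.pipe(
            prompt=inputs["prompt"],
            height=int(inputs.get("height", 1024)),
            width=int(inputs.get("width", 1024)),
            num_inference_steps=int(inputs.get("num_inference_steps", 4)),
            guidance_scale=float(inputs.get("guidance_scale", 0.0)),
            max_sequence_length=int(inputs.get("max_sequence_length", 256)),
        )
        # Encode the first generated image as base64 so it fits in a JSON response.
        buffer = io.BytesIO()
        result.images[0].save(buffer, format="JPEG")
        encoded = base64.b64encode(buffer.getvalue()).decode("utf-8")
        return {"generated_image_base64": encoded}  # hypothetical output key

    def finalize(self):
        # Drop the pipeline reference so its memory can be reclaimed.
        self.pipe = None
```

Returning the image as a base64 string is one common way to ship binary data through a JSON API; other encodings work equally well if your client expects them.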

Create the Input Schema

We have to create an input_schema.py in the GitHub/GitLab repository; this will help us create the input parameters. You can check out our documentation on Input / Output Schema.

For this tutorial, we have defined six parameters: prompt, height, width, num_inference_steps, guidance_scale, and max_sequence_length, which are required during the API call. Now let’s create the input_schema.py.
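A schema covering these six parameters might look like the sketch below. The layout (an INPUT_SCHEMA dictionary with datatype, required, shape, and example keys), the datatype names, and the example values are all assumptions here; check them against the Input / Output Schema documentation before relying on them.

```python
# input_schema.py (sketch): field layout and datatype names are assumed,
# and the example values are illustrative only.
INPUT_SCHEMA = {
    "prompt": {
        "datatype": "STRING",
        "required": True,
        "shape": [1],
        "example": ["A lighthouse on a cliff at sunset"],
    },
    "height": {
        "datatype": "INT16",
        "required": True,
        "shape": [1],
        "example": [1024],
    },
    "width": {
        "datatype": "INT16",
        "required": True,
        "shape": [1],
        "example": [1024],
    },
    "num_inference_steps": {
        "datatype": "INT16",
        "required": True,
        "shape": [1],
        "example": [4],
    },
    "guidance_scale": {
        "datatype": "FP32",
        "required": True,
        "shape": [1],
        "example": [0.0],
    },
    "max_sequence_length": {
        "datatype": "INT16",
        "required": True,
        "shape": [1],
        "example": [256],
    },
}
```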

Creating the Custom Runtime

This is a mandatory step where we allow the users to upload their own custom runtime through inferless-runtime-config.yaml.

Test your model with Remote Run

You can use the inferless remote-run command (installation guide here) to test your model or any custom Python script in a remote GPU environment directly from your local machine. Make sure that you use Python 3.10 for a seamless experience.

Step 1: Add the Decorators and local entry point

To enable Remote Run, simply do the following:

- Import the inferless library and initialize Cls(gpu="A100"). The available GPU options are T4, A10 and A100.

- Decorate the initialize and infer functions with @app.load and @app.infer respectively.

- Create the Local Entry Point by decorating a function (for example, my_local_entry) with @inferless.local_entry_point. Within this function, instantiate your model class, convert any incoming parameters into a RequestObjects object, and invoke the model’s infer method.

Step 2: Run with Remote GPU

From your local terminal, navigate to the folder containing your app.py and your inferless-runtime-config.yaml and run:

You can pass additional input parameters as command-line flags (for example, --height, --width, etc.) as long as your code expects them in the inputs dictionary.

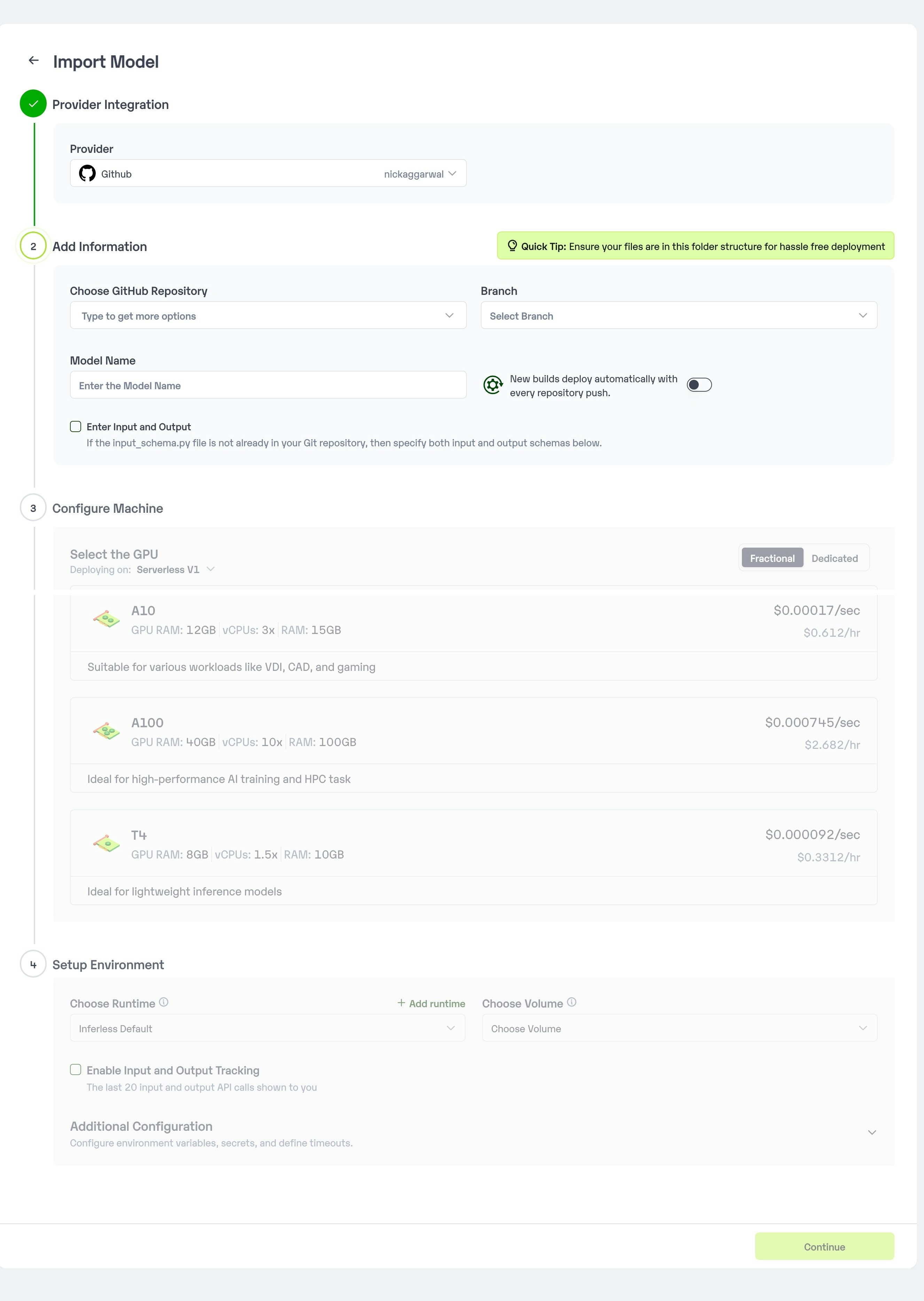

Method A: Deploying the model on Inferless Platform

Inferless supports multiple ways of importing your model. For this tutorial, we will use GitHub.

Step 1: Log in to the Inferless dashboard and click on the Import model button

Navigate to your desired workspace in Inferless and click on the Add a custom model button that you see on the top right. An import wizard will open up.

Step 2: Follow the UI to complete the model Import

- Select the GitHub/GitLab Integration option to connect your source code repository with the deployment environment.

- Navigate to the specific GitHub repository that contains your model’s code. Here, you will need to identify and enter the name of the model you wish to import.

- Choose the appropriate type of machine that suits your model’s requirements. Additionally, specify the minimum and maximum number of replicas to define the scalability range for deploying your model.

- Optionally, enable automatic build and deployment. This feature triggers a new deployment automatically whenever there is a new code push to your repository.

- If your model requires additional software packages, configure the Custom Runtime settings by including necessary pip or apt packages. Also, set up environment variables such as Inference Timeout, Container Concurrency, and Scale Down Timeout to tailor the runtime environment according to your needs.

- Wait for the validation process to complete, ensuring that all settings are correct and functional. Once validation is successful, click on the “Import” button to finalize the import of your model.

Step 3: Wait for the model build to complete (usually takes ~5-10 minutes)

Step 4: Use the APIs to call the model

Once the model is in ‘Active’ status, you can click on the ‘API’ page to call the model.

Here is the Demo:

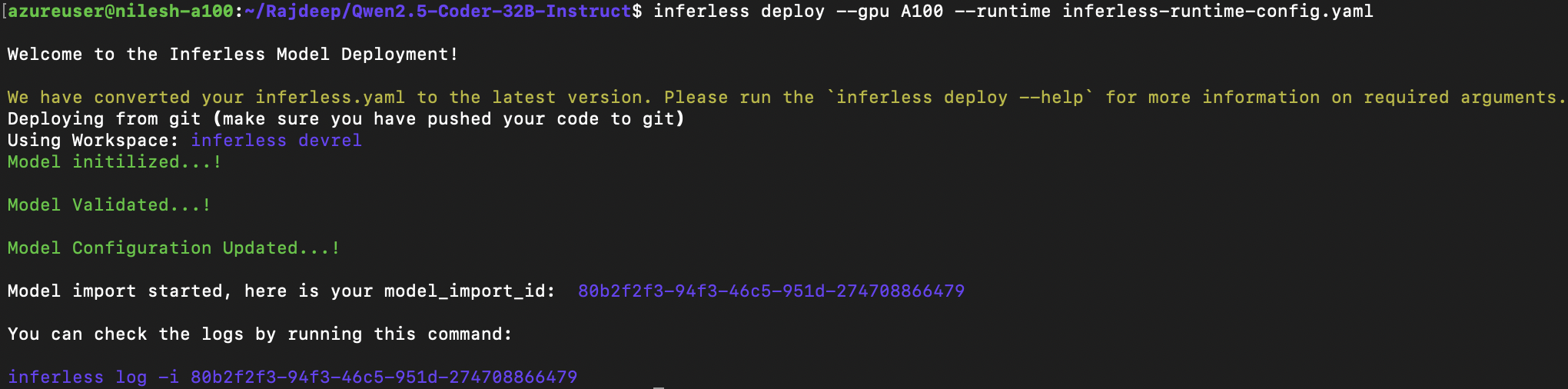
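As a rough illustration of calling the deployed model from code, the sketch below builds a POST request with Python's standard library. The endpoint URL and API key are placeholders, and the request envelope (an inputs list of name/shape/data/datatype entries with a Bearer token) and the datatype strings are assumptions; copy the exact request shown on your model's API page rather than relying on this shape.

```python
import json
import urllib.request

# Placeholders: replace with the values shown on your model's API page.
API_URL = "https://example.invalid/your-inference-endpoint"
API_KEY = "YOUR_API_KEY"


def build_payload(params):
    """Wrap plain Python values in the assumed name/shape/data/datatype envelope."""
    inputs = []
    for name, value in params.items():
        if isinstance(value, str):
            datatype = "BYTES"   # assumed datatype string for text inputs
        elif isinstance(value, float):
            datatype = "FP32"    # assumed datatype string for floats
        else:
            datatype = "INT16"   # assumed datatype string for small integers
        inputs.append(
            {"name": name, "shape": [1], "data": [value], "datatype": datatype}
        )
    return {"inputs": inputs}


def build_request(params):
    """Create the POST request without sending it."""
    body = json.dumps(build_payload(params)).encode("utf-8")
    headers = {
        "Content-Type": "application/json",
        "Authorization": f"Bearer {API_KEY}",
    }
    return urllib.request.Request(API_URL, data=body, headers=headers, method="POST")


# To send the request once the placeholders are filled in:
#   with urllib.request.urlopen(build_request({...}), timeout=120) as resp:
#       print(resp.read())
```

Keeping request construction separate from sending makes it easy to inspect the JSON body before any network call is made.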

Method B: Deploying the model on Inferless CLI

Inferless allows you to deploy your model using Inferless-CLI. Follow the steps to deploy using Inferless CLI.

Clone the repository of the model

Let’s begin by cloning the model repository:

Deploy the Model

To deploy the model using Inferless CLI, execute the following command:

- --gpu A100: Specifies the GPU type for deployment. Available options include A10, A100, and T4.

- --runtime inferless-runtime-config.yaml: Defines the runtime configuration file. If not specified, the default Inferless runtime is used.