Key Components of the Application

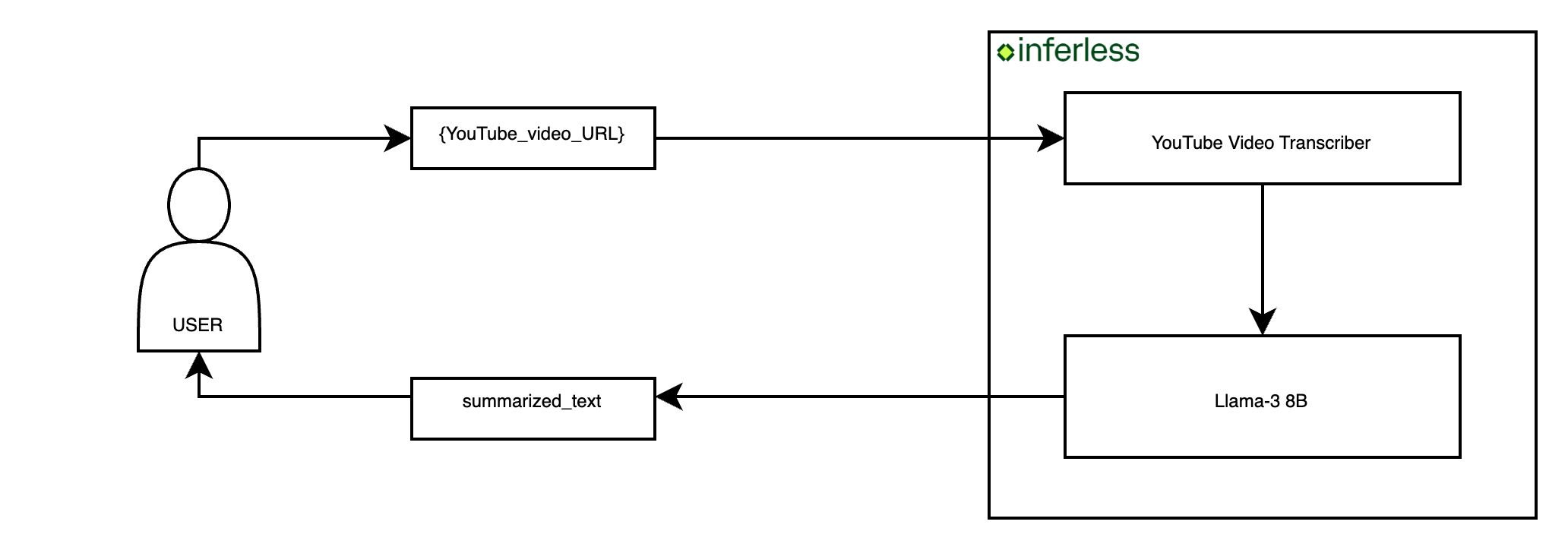

To build this application, we will use these components:

- YouTube Video Transcriber: Retrieves the transcript of a given YouTube video. We will use youtube-transcript-api.

- Text Summarization Model: This model summarizes the transcribed text from the video. We will use a fine-tuned Llama-3 8B model.
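As a rough illustration of the transcription step, the sketch below shows the two pieces of glue code that usually surround the transcript library: extracting the video ID from a URL, and joining transcript segments into one string for the summarizer. The helper names `extract_video_id` and `segments_to_text` are hypothetical, and the segment shape (a list of dictionaries with a `text` field) is an assumption about what the transcript library returns; check the documentation of the youtube-transcript-api version you install.

```python
from urllib.parse import parse_qs, urlparse

# Hosts treated as YouTube watch pages in this sketch (an assumption, not an exhaustive list).
YOUTUBE_HOSTS = {"youtube.com", "www.youtube.com", "m.youtube.com"}


def extract_video_id(url: str) -> str:
    """Return the video ID from a common YouTube URL form (hypothetical helper)."""
    parsed = urlparse(url)
    host = (parsed.hostname or "").lower()
    if host == "youtu.be":
        # Short links carry the ID as the first path segment.
        return parsed.path.lstrip("/").split("/")[0]
    if host in YOUTUBE_HOSTS:
        if parsed.path == "/watch":
            ids = parse_qs(parsed.query).get("v", [])
            if ids:
                return ids[0]
        for prefix in ("/embed/", "/shorts/"):
            if parsed.path.startswith(prefix):
                return parsed.path[len(prefix):].split("/")[0]
    raise ValueError(f"Could not find a video ID in: {url}")


def segments_to_text(segments) -> str:
    """Join transcript segments into one string.

    Assumes each segment is a dict with a "text" key.
    """
    return " ".join(s["text"].strip() for s in segments if s["text"].strip())
```

The resulting string is what would be passed to the summarization model.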

Crafting Your Application

This tutorial guides you through the creation process of a YouTube video summarizer application.

Core Development Steps

- Objective: Accept a YouTube video URL as input and generate a summary.

- Action: Implement a Python class (InferlessPythonModel) that handles the entire process, including input handling, transcription, and summary generation.
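A minimal sketch of how such a class might be organized is shown below. It assumes the usual Inferless layout of `initialize`, `infer`, and `finalize` methods. The constructor arguments, the `video_url` input key, and the `summary` output key are hypothetical choices made for illustration, and the transcription and summarization steps are passed in as plain callables so the flow can be read (and tested) without loading a real model.

```python
from typing import Callable, Dict, Optional


class InferlessPythonModel:
    """Sketch of the request flow: URL in, transcript fetched, summary out."""

    def __init__(
        self,
        fetch_transcript: Optional[Callable[[str], str]] = None,
        summarize: Optional[Callable[[str], str]] = None,
    ) -> None:
        # Hypothetical injection points; a real app would build these in initialize().
        self._fetch_transcript = fetch_transcript
        self._summarize = summarize

    def initialize(self) -> None:
        # In a real deployment, this is where the transcript client and the
        # summarization model would be loaded once, before serving requests.
        if self._fetch_transcript is None or self._summarize is None:
            raise RuntimeError("Transcriber and summarizer must be configured")

    def infer(self, inputs: Dict[str, str]) -> Dict[str, str]:
        video_url = inputs["video_url"]  # assumed input key
        transcript = self._fetch_transcript(video_url)
        summary = self._summarize(transcript)
        return {"summary": summary}  # assumed output key

    def finalize(self) -> None:
        # Release references so resources can be reclaimed on scale-down.
        self._fetch_transcript = None
        self._summarize = None
```

Keeping the two steps behind simple callables also makes it easy to swap the summarization model later without touching the request handling.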

Setting up the Environment

Dependencies:

- Objective: Ensure all necessary libraries are installed.

- Action: Run the command below to install dependencies:

Deploying Your Model with Inferless CLI

Inferless allows you to deploy your model using the Inferless CLI. Follow the steps below to deploy.

Clone the repository of the model

Let’s begin by cloning the model repository:

Deploy the Model

To deploy the model using Inferless CLI, execute the following command:

- `--gpu A100`: Specifies the GPU type for deployment. Available options include A10, A100, and T4.

- `--runtime inferless-runtime-config.yaml`: Defines the runtime configuration file. If not specified, the default Inferless runtime is used.

Demo of the YouTube Video Summarizer.

Alternative Deployment Method

Inferless also supports a user-friendly UI for model deployment, catering to users at all skill levels. Refer to Inferless’s documentation for guidance on UI-based deployment.

Choosing Inferless for Deployment

Deploying your YouTube Video Summarizer with Inferless offers compelling advantages, making your development journey smoother and more cost-effective. Here’s why Inferless is the go-to choice:

- Ease of Use: Forget the complexities of infrastructure management. With Inferless, you simply bring your model, and within minutes, you have a working endpoint. Deployment is hassle-free, without the need for in-depth knowledge of scaling or infrastructure maintenance.

- Cold-start Times: Inferless’s unique load balancing ensures faster cold starts. Expect a cold-start overhead of around 13.42 seconds per query, significantly faster than many traditional platforms.

- Cost Efficiency: Inferless optimizes resource utilization, translating to lower operational costs. Here’s a simplified cost comparison:

Scenario 1

You are looking to deploy a YouTube Video Summarizer for processing 100 queries per day.

Parameters:

- Total number of queries: 100 daily.

- Inference Time: All models are hypothetically deployed on A100 80GB, taking 6.67 seconds of processing time and a cold start overhead of 13.42 seconds.

- Scale Down Timeout: Uniformly 60 seconds across all platforms, except Hugging Face, which requires a minimum of 15 minutes. This is assumed to happen 100 times a day.

- Inference Duration:

  Processing 100 queries, each taking 6.67 seconds

  Total: 100 x 6.67 = 667 seconds (or approximately 0.19 hours)

- Idle Timeout Duration:

  Post-processing idle time before scaling down: (60 seconds - 6.67 seconds) x 100 = 5333 seconds (or approximately 1.48 hours)

- Cold Start Overhead:

  Total: 100 x 13.42 = 1342 seconds (or approximately 0.37 hours)

Total Billable Hours with Inferless: 2.04 hours
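The arithmetic above can be reproduced with a short script. This is only a sketch of the calculation as described in this section: the function name `billable_hours` and its parameter names are made up for illustration, and the model (inference time, plus idle time before each scale-down, plus one cold start per scale-down) is taken directly from the stated parameters.

```python
def billable_hours(queries, inference_s, cold_start_s, timeout_s, scale_downs):
    """Return a breakdown of billable time in hours (hypothetical helper).

    queries      -- number of queries per day
    inference_s  -- processing time per query, in seconds
    cold_start_s -- cold-start overhead per scale-up, in seconds
    timeout_s    -- scale-down timeout, in seconds
    scale_downs  -- assumed number of scale-down events per day
    """
    inference = queries * inference_s
    idle = scale_downs * (timeout_s - inference_s)
    cold_start = scale_downs * cold_start_s
    total = inference + idle + cold_start
    return {
        "inference": inference / 3600,
        "idle": idle / 3600,
        "cold_start": cold_start / 3600,
        "total": total / 3600,
    }


# Scenario 1: 100 queries per day, 100 assumed scale-downs.
scenario_1 = billable_hours(100, 6.67, 13.42, 60, 100)
print(round(scenario_1["total"], 2))  # 2.04
```

Changing `queries` while keeping `scale_downs` at 100 gives the figures for a heavier daily load under the same scale-down assumption.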

Scenario 2

You are looking to deploy a YouTube Video Summarizer for processing 1000 queries per day.

Key Computations:

- Inference Duration:

  Processing 1000 queries, each taking 6.67 seconds

  Total: 1000 x 6.67 = 6670 seconds (or approximately 1.85 hours)

- Idle Timeout Duration:

  Post-processing idle time before scaling down, for the assumed 100 scale-downs per day: (60 seconds - 6.67 seconds) x 100 = 5333 seconds (or approximately 1.48 hours)

- Cold Start Overhead:

  Total: 100 x 13.42 = 1342 seconds (or approximately 0.37 hours)

Total Billable Hours with Inferless: 3.7 hours

Pricing Comparison for All Scenarios

| Scenarios | On-Demand Cost | Serverless Cost |
|---|---|---|
| 100 requests/day | $28.8 (24 hours billed at $1.22/hour) | $2.49 (2.04 hours billed at $1.22/hour) |
| 1000 requests/day | $28.8 (24 hours billed at $1.22/hour) | $4.51 (3.7 hours billed at $1.22/hour) |

Please note that we used the A100 (80 GB) GPU for model benchmarking, while for the pricing comparison we referenced the A10G GPU price from both platforms. This is due to the unavailability of the A100 GPU in SageMaker. Also, the above analysis is based on a smaller-scale scenario for demonstration purposes. Should the scale increase tenfold, traditional cloud services might require keeping 2-4 GPUs constantly active to manage peak loads efficiently. In contrast, Inferless, with its dynamic scaling capabilities, adjusts to fluctuating demand without the need for continuously running hardware.
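For readers who want to plug in their own numbers, the serverless figures in the pricing table follow from multiplying billable hours by the hourly rate. The sketch below assumes the $1.22/hour rate and the billable hours quoted in this section; the function name `serverless_cost` is hypothetical.

```python
def serverless_cost(billable_hours: float, hourly_rate: float) -> float:
    """Daily cost in dollars, rounded to cents (hypothetical helper)."""
    return round(billable_hours * hourly_rate, 2)


HOURLY_RATE = 1.22  # dollars per hour, as quoted in the pricing table

print(serverless_cost(2.04, HOURLY_RATE))  # 2.49 (100 requests/day)
print(serverless_cost(3.7, HOURLY_RATE))   # 4.51 (1000 requests/day)
```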